World Data Processing Units (DPUs) Market 2026 Analysis and Forecast to 2035

Executive Summary

The global Data Processing Unit (DPU) market reflects a foundational shift in data center and cloud infrastructure architecture. As a specialized processor designed to offload and accelerate network, storage, and security functions from a server's host CPUs, the DPU is becoming critical for handling escalating data volumes, strengthening security, and improving overall computational efficiency. This report analyzes the market landscape as of 2026 and examines the technological, economic, and competitive forces shaping its trajectory through 2035.

The market's evolution is driven by the rapid growth of data-centric workloads, including artificial intelligence, machine learning, and real-time analytics, which strain traditional server designs. DPUs offer a way to reclaim CPU cores for primary applications, reduce latency, and create a more secure, programmable infrastructure layer. Adoption is now moving beyond early cloud-scale deployments into broader enterprise use, signaling a significant and sustained expansion phase.

This analysis dissects the complex supply chain, from leading semiconductor designers to system integrators, and evaluates pricing and competitive dynamics in a market characterized by rapid innovation. The strategic implications reach stakeholders across the technology stack, influencing decisions on hardware procurement, software development, and long-term data center strategy. The outlook to 2035 points toward the DPU becoming a ubiquitous component in modern computing, integral to the next generation of efficient and agile infrastructure.

Market Overview

The Data Processing Unit (DPU) market has emerged from a niche technology into a central pillar of modern data center design. A DPU is a system-on-a-chip (SoC) that typically combines multi-core CPUs, high-performance networking interfaces, and programmable acceleration engines onto a single piece of silicon. Its primary function is to manage data-centric infrastructure tasks—such as network virtualization, storage processing, and security policy enforcement—freeing the host server's primary CPUs to run business applications and revenue-generating workloads.

The market's structure encompasses several key layers: semiconductor intellectual property (IP) providers, chip designers and manufacturers, card or module integrators, and end users among cloud service providers, enterprises, and telecommunications companies. The technology's value proposition is strongest in environments where data movement, rather than computation itself, is the bottleneck. By processing data as it enters or leaves the server, DPUs take infrastructure work off the main CPU complex, which can translate into measurable improvements in performance per watt and total cost of ownership.
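
The offload argument can be made concrete with a back-of-the-envelope model. The Python sketch below is purely illustrative: the server size, the share of cores consumed by infrastructure tasks, the power figures, and the `ServerConfig` and `app_cores_per_watt` names are hypothetical assumptions chosen for readability, not measurements from this report.

```python
# Hypothetical illustration: how offloading infrastructure tasks to a DPU
# changes the cores left for applications and application cores per watt.
# All inputs are assumptions, not figures from this report.
from dataclasses import dataclass


@dataclass
class ServerConfig:
    total_cores: int         # host CPU cores in the server
    infra_core_share: float  # fraction of cores spent on network/storage/security tasks
    server_watts: float      # host power draw without a DPU


def app_cores_per_watt(cfg: ServerConfig, dpu_watts: float = 0.0,
                       offloaded_share: float = 0.0) -> float:
    """Application cores per watt after moving `offloaded_share` of the
    infrastructure work onto a DPU that draws `dpu_watts`."""
    infra_cores = cfg.total_cores * cfg.infra_core_share * (1.0 - offloaded_share)
    app_cores = cfg.total_cores - infra_cores
    return app_cores / (cfg.server_watts + dpu_watts)


cfg = ServerConfig(total_cores=64, infra_core_share=0.25, server_watts=500.0)
baseline = app_cores_per_watt(cfg)
with_dpu = app_cores_per_watt(cfg, dpu_watts=75.0, offloaded_share=0.9)
print(f"baseline: {baseline:.4f} app cores/W, with DPU: {with_dpu:.4f} app cores/W")
```

With these made-up inputs the DPU raises application cores per watt only because it absorbs most of the infrastructure work; a DPU that adds power but offloads little would lower the ratio, which is why the benefit concentrates where data movement dominates.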

As of the 2026 analysis period, the market is in a phase of accelerated growth and technological diversification. Initial deployments were heavily concentrated among hyperscale cloud providers seeking maximum efficiency at massive scale. The current trend shows a clear diffusion into enterprise data centers, telecom edge networks, and high-performance computing clusters, driven by the broader industry shift towards disaggregated, composable infrastructure and zero-trust security models.

Demand Drivers and End-Use

Demand for DPUs is not driven by a single factor but by a confluence of macro-technological trends that are collectively redefining computing infrastructure. The exponential growth of data, particularly unstructured data from IoT devices, video streams, and sensor networks, creates immense pressure on server I/O subsystems. Traditional server architectures, where general-purpose CPUs handle both application and infrastructure tasks, are proving inefficient and costly at this scale, creating a compelling economic case for hardware offload via DPUs.

The proliferation of artificial intelligence and machine learning workloads is a paramount driver. These workloads involve moving vast datasets between storage, memory, and processors. DPUs accelerate this data pipeline, reducing the time that CPUs and AI accelerators spend waiting for data and thereby shortening training and inference cycles. Furthermore, microservices-based, containerized applications in cloud-native environments generate heavy east-west (server-to-server) traffic within data centers, which DPUs are architected to process and secure efficiently.

End-use segmentation reveals distinct adoption patterns and requirements:

- Hyperscale Cloud Providers: As the pioneering adopters, they deploy DPUs at scale to maximize server utilization, enable multi-tenancy with strong isolation, and offer infrastructure offload to their customers as a service. They demand highly customized, power-efficient solutions.

- Enterprise Data Centers: Adoption is driven by the need for enhanced security (e.g., embedded firewalling and encryption), better performance for virtual desktop infrastructure (VDI) and databases, and simpler operation of software-defined infrastructure.

- Telecommunications & Edge Computing: For 5G core networks and edge deployments, DPUs provide the necessary performance to handle network function virtualization (NFV) and ensure low-latency processing for real-time applications.

- High-Performance Computing (HPC) & Supercomputing: Here, DPUs are valued for offloading MPI (Message Passing Interface) communication and managing complex storage hierarchies, contributing directly to faster scientific and engineering simulations.

Underpinning all these drivers is the relentless pursuit of efficiency—in energy consumption, capital expenditure, and operational management. As infrastructure becomes increasingly software-defined, the DPU provides the essential hardware anchor to execute these software policies at line rate, making it a cornerstone of the modern, agile data center.

Supply and Production

The supply landscape for DPUs is characterized by a mix of established semiconductor giants, well-funded startups, and strategic partnerships across the technology ecosystem. Production involves a sophisticated chain, beginning with the licensing of core intellectual property. Key IP includes CPU architectures (both Arm and x86), high-speed SerDes for networking interfaces, and various acceleration engine designs. Chip designers then integrate this IP into a complete DPU SoC design.

The manufacturing of these advanced SoCs is concentrated at leading-edge foundries using 7 nm-class and more advanced process technologies. This reliance on cutting-edge fabrication plants (fabs) raises considerations around production capacity, geopolitical factors affecting supply chains, and the significant capital investment required. After fabrication, the silicon dies are packaged and typically mounted onto a PCIe card or a custom module, which includes memory, networking ports (often 25/100/200 GbE or higher), and management controllers. This card-level integration is a critical step that adds significant value and differentiation.

Several distinct business models coexist in the market. Some players follow a traditional semiconductor model, selling DPU chips or cards to server original equipment manufacturers (OEMs) and cloud providers. Others, particularly those with cloud origins, design chips for their own internal consumption, optimizing them precisely for their unique software stack and operational needs. A third model involves offering a full-stack solution, combining DPU hardware with a comprehensive software platform for infrastructure management, security, and storage. This vertical integration allows for tighter optimization but also shapes competitive dynamics and market accessibility.

Trade and Logistics

The global trade of DPUs and DPU-equipped systems is intertwined with the broader semiconductor and server supply chains, which are among the most complex and globally distributed. The physical journey of a DPU typically begins at a foundry in East Asia; the dies are then packaged and shipped to card or module assembly and test facilities, often located in regions with established electronics manufacturing ecosystems. Final integration onto server motherboards, or as add-in cards, occurs at OEM facilities, which may be geographically dispersed to serve key markets efficiently.

Logistics for these high-value, sensitive electronic components require stringent controls. Shipments must guard against electrostatic discharge (ESD), maintain specified environmental conditions, and ensure high security given the strategic nature of the technology. The just-in-time manufacturing models prevalent in the server industry mean that inventory is often held in transit or at strategic hubs, an arrangement intended to keep the logistics network responsive to demand fluctuations from major cloud and enterprise customers.

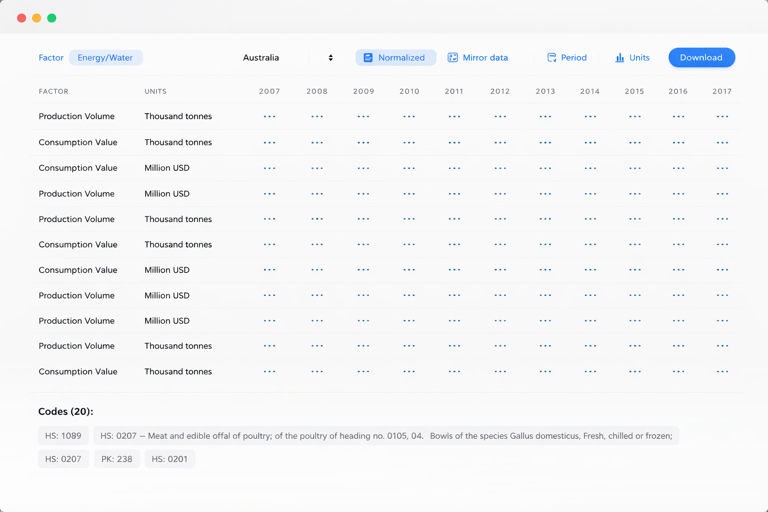

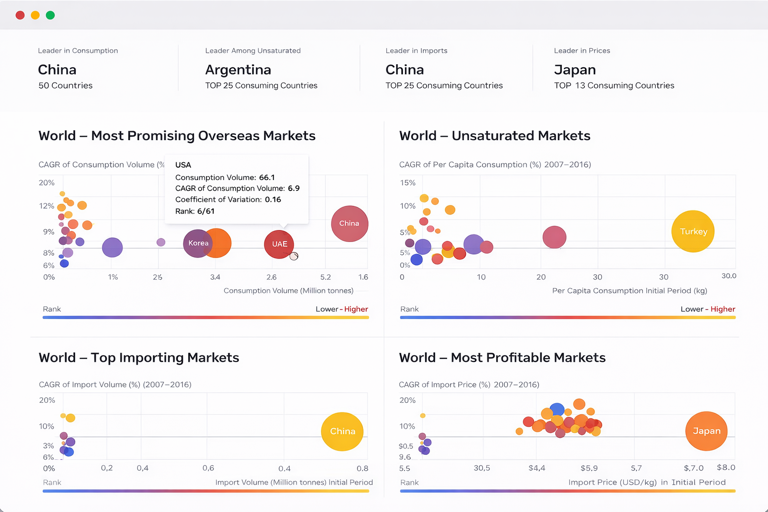

Trade dynamics are influenced by several factors. First, the classification of DPUs under Harmonized System (HS) codes can affect tariffs and export controls, particularly given their dual-use (commercial and national security) potential. Second, geopolitical tensions can drive regionalization efforts, in which supply chains are reconfigured to mitigate risk, potentially duplicating manufacturing capacity across geographic blocs. Finally, the end user's location, whether a server is destined for a data center in North America, Europe, or Asia-Pacific, determines the final leg of trade, including customs clearance and delivery to the deployment site.

Price Dynamics

Pricing in the DPU market is not monolithic; it varies significantly by product tier, integration level, and sales volume. At the chip level, prices are influenced by the cost of advanced semiconductor manufacturing, licensing fees for proprietary IP (especially CPU cores), and the bill of materials for the SoC. Higher core counts, more advanced networking interfaces (e.g., 400 GbE), and more on-chip memory or acceleration engines command a premium. Economies of scale, however, are powerful: a chip ordered in the millions by a hyperscaler will carry a far lower unit cost than one ordered in the thousands by an enterprise OEM.
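
One mechanism behind the volume effect is fixed-cost amortization: one-time design, mask, and IP costs are spread over every unit produced. The sketch below models only that mechanism (volume discounts and negotiating leverage are ignored); the `unit_cost` helper and all cost figures are hypothetical placeholders, not market data.

```python
# Hypothetical sketch: why lifetime production volume drives DPU unit cost.
# A fixed one-time cost (design, masks, IP licenses) is spread across every
# unit produced, while the per-unit variable cost stays roughly flat.
# All numbers below are illustrative placeholders, not market data.


def unit_cost(fixed_cost: float, variable_cost: float, volume: int) -> float:
    """Average cost per chip when `fixed_cost` is amortized over `volume` units."""
    if volume <= 0:
        raise ValueError("volume must be positive")
    return variable_cost + fixed_cost / volume


FIXED_COST = 200_000_000.0  # placeholder one-time engineering, mask and IP cost
VARIABLE_COST = 150.0       # placeholder per-chip wafer, packaging and test cost

for label, volume in [("tens of thousands", 50_000), ("millions", 2_000_000)]:
    print(f"{label:>18}: {unit_cost(FIXED_COST, VARIABLE_COST, volume):,.2f} per chip")
```

Under these placeholder costs the fixed component dominates at low volume and becomes a minor share at hyperscale volume, which illustrates why custom silicon tends to be economical only for operators deploying in very large numbers.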

The price to the end user is more commonly seen at the card or system level. A DPU add-in card sold through distribution channels carries a markup covering the chip cost, board manufacturing, memory, connectors, cooling, and the supplier's profit margin. When an OEM integrates a DPU into a server, its cost is bundled into the total system price, often positioned as enabling a higher-tier, more performant, and more efficient server SKU. In such cases, pricing is less transparent and is justified by total system benefits: higher virtual machine density, lower per-core software licensing fees, and reduced energy consumption.
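
The density argument can be sketched at fleet level: a pricier DPU-equipped SKU can still lower total capital cost if each server hosts enough additional VMs. The `fleet_cost` helper, the VM counts, and the server prices below are hypothetical assumptions for illustration only.

```python
# Hypothetical fleet-sizing sketch: does a more expensive DPU-equipped SKU
# pay for itself through higher VM density? All inputs are assumptions.
import math


def fleet_cost(total_vms: int, vms_per_server: int,
               server_price: float) -> tuple[int, float]:
    """Return (servers needed, capital cost) to host `total_vms` virtual machines."""
    servers = math.ceil(total_vms / vms_per_server)  # partial servers round up
    return servers, servers * server_price


# Assumed: the DPU SKU costs more per server but hosts more VMs, because
# cores previously spent on infrastructure tasks now run guest workloads.
standard = fleet_cost(total_vms=10_000, vms_per_server=40, server_price=20_000.0)
dpu_sku = fleet_cost(total_vms=10_000, vms_per_server=48, server_price=22_000.0)
print("standard:", standard)
print("DPU SKU: ", dpu_sku)
```

In this toy model the DPU SKU needs 209 servers instead of 250 and would remain cheaper at fleet level until its price approached about 23,900 per server (5,000,000 / 209); real comparisons would also include the licensing and energy terms mentioned above.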

Several factors exert downward and upward pressure on prices. Downward pressure comes from technological maturation, increased competition as more players enter the market, and the long-run decline in cost per transistor associated with Moore's Law, although that decline has slowed at leading-edge nodes. Upward pressure stems from more advanced features (e.g., integrated AI accelerators, post-quantum cryptography engines), supply chain constraints for specific components, and inflationary pressure on logistics and raw materials. The long-term price trajectory is expected to follow a pattern common to disruptive technologies: initial premium pricing for early adopters, followed by a decline as volume increases and standardization occurs, with sustained premiums for cutting-edge, feature-rich models.
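
A common way to model "prices decline as volume increases" is an experience curve (Wright's law), in which price falls by a fixed percentage with each doubling of cumulative volume. This is a standard technique swapped in for illustration, not the forecasting model behind this report; the `experience_curve_price` helper, the 85% learning rate, and the price floor are all assumptions.

```python
# Illustrative experience-curve (Wright's law) sketch of DPU price decline.
# The learning rate, starting price and floor are placeholder assumptions.
import math


def experience_curve_price(initial_price: float, initial_volume: float,
                           cumulative_volume: float, learning_rate: float,
                           floor_price: float) -> float:
    """Price at `cumulative_volume` under Wright's law, never below `floor_price`.

    `learning_rate` is the price multiplier per doubling of cumulative volume
    (0.85 means each doubling cuts the price by 15%).
    """
    b = -math.log2(learning_rate)  # exponent of the power-law decline
    price = initial_price * (cumulative_volume / initial_volume) ** (-b)
    return max(floor_price, price)


for doublings in range(0, 7, 2):
    volume = 100_000 * 2 ** doublings
    price = experience_curve_price(2_000.0, 100_000, volume, 0.85, floor_price=900.0)
    print(f"{doublings} doublings of cumulative volume: {price:,.0f}")
```

The floor stands in for the sustained premium the text expects for feature-rich models; in practice each product tier would follow its own curve, and new generations reset the starting price.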

Competitive Landscape

The competitive arena for DPUs is dynamic and features several categories of players, each with distinct strategies and advantages. The landscape can be segmented into dedicated semiconductor vendors, vertically integrated cloud providers, and specialized startups and pure-play DPU companies.

- Dedicated Semiconductor Vendors: These are established companies with deep expertise in networking, storage, or general-purpose processors that have pivoted or expanded into DPUs. Their strengths lie in broad sales channels, longstanding relationships with OEMs, and extensive validation ecosystems. They often offer a range of products from performance-optimized to cost-sensitive models.

- Vertically Integrated Cloud Providers: Several hyperscale cloud operators have developed their own custom DPU silicon. Their competitive advantage is unparalleled scale and the ability to co-design hardware and software closely for their specific workloads and operational models. Although these chips are initially built for internal use, they let their owners optimize infrastructure costs aggressively and can influence open-source software standards.

- Specialized Startups & Pure-Plays: A number of well-funded startups have emerged focusing exclusively on DPU technology. Their approach is often to innovate rapidly on architecture or software programmability, offering a highly differentiated solution. They compete by being more agile and focused than larger incumbents, though they face challenges in scaling manufacturing and building global sales support.

Competition revolves around several key axes: raw performance (throughput, latency); power efficiency; the richness and openness of the accompanying software development kit (SDK) and ecosystem; security features; and the strength of partnerships with major server OEMs, independent software vendors (ISVs), and cloud platforms. The market is currently in a phase where architectural differentiation is significant, but over the forecast period to 2035, a degree of standardization around programming models and interoperability is likely to occur, which will shift competitive emphasis towards software, services, and ecosystem breadth.

Methodology and Data Notes

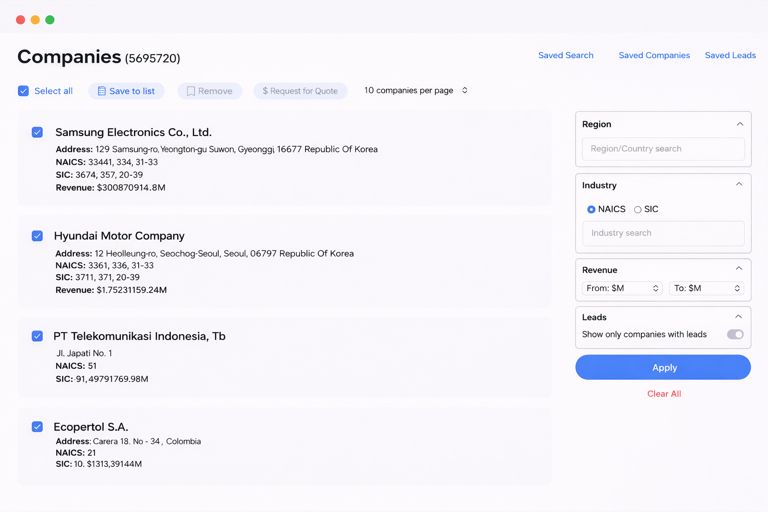

This report is built upon a multi-faceted research methodology designed to ensure analytical rigor, accuracy, and actionable insight. The foundation is a combination of primary and secondary research, synthesized through a consistent analytical framework. Primary research involved structured interviews and surveys with key industry stakeholders, including DPU chip architects, product managers at leading semiconductor firms, infrastructure engineers at cloud and enterprise data centers, and procurement specialists at server OEMs. These discussions provided ground-level perspective on adoption challenges, technical requirements, and purchasing criteria.

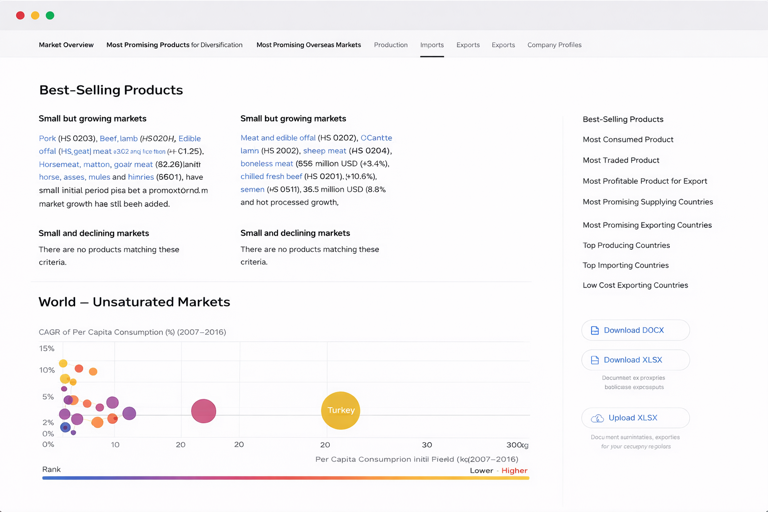

Secondary research comprised an exhaustive review of publicly available information, including company financial reports, product announcements, technical white papers, patent filings, and transcripts from industry conferences. Market sizing and trend analysis were conducted using a bottom-up approach, modeling demand from key end-use segments and cross-validating with supply-side production estimates and channel feedback. Financial analysis examined the public financial statements of relevant publicly traded companies to understand revenue trends, R&D investment levels, and profitability metrics in related segments.
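
A bottom-up sizing model of the kind described above can be sketched as segment shipments times attach rate times average price, with the resulting unit total checked against an independent supply-side estimate. The segment names follow this report, but every number, the `Segment` type, and the helper functions are hypothetical placeholders; consistent with the report's data policy, none of them are market figures.

```python
# Hypothetical bottom-up sizing sketch with a supply-side cross-check.
# Segment names follow the report; every number is a placeholder assumption.
from dataclasses import dataclass


@dataclass
class Segment:
    name: str
    server_shipments: int   # placeholder: servers shipped into the segment per year
    dpu_attach_rate: float  # placeholder: share of those servers fitted with a DPU
    avg_price: float        # placeholder: average selling price per DPU unit


def demand_units(segments: list[Segment]) -> float:
    """Bottom-up unit demand: shipments x attach rate, summed over segments."""
    return sum(s.server_shipments * s.dpu_attach_rate for s in segments)


def demand_revenue(segments: list[Segment]) -> float:
    """Bottom-up revenue: unit demand x average selling price, summed over segments."""
    return sum(s.server_shipments * s.dpu_attach_rate * s.avg_price for s in segments)


def relative_gap(demand_side: float, supply_side: float) -> float:
    """Relative difference between demand-side and supply-side unit estimates."""
    return (demand_side - supply_side) / supply_side


segments = [
    Segment("Hyperscale cloud", 1_000_000, 0.50, 800.0),
    Segment("Enterprise", 2_000_000, 0.05, 1_500.0),
    Segment("Telecom & edge", 200_000, 0.20, 1_200.0),
    Segment("HPC", 50_000, 0.30, 1_500.0),
]
units = demand_units(segments)
revenue = demand_revenue(segments)
supply_units = 630_000.0  # placeholder supply-side estimate (production and channel checks)
gap = relative_gap(units, supply_units)
print(f"demand-side units: {units:,.0f}, revenue: {revenue:,.0f}, gap vs supply: {gap:+.1%}")
```

In a model like this, a large gap between the two sides would prompt revisiting the attach-rate or price assumptions before any figure is carried forward.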

All quantitative data presented, including market size figures, are derived from this synthesized research process. The report adheres to a strict policy on absolute numbers: only figures obtained directly from official financial disclosures, regulatory filings, or validated market research consensus are cited as absolute values. Relative metrics, such as growth rates, market shares, and rankings, are analytical inferences based on the aggregation and triangulation of all available qualitative and quantitative information. The forecast projections to 2035 extrapolate the identified drivers, constraints, and technology adoption curves, and are presented as directional trends rather than as precise absolute figures.
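
Technology adoption curves are often modeled as a logistic S-curve, and growth rates as a compound annual growth rate (CAGR). The sketch below assumes a logistic form; the midpoint year, steepness, saturation level, and the `adoption_share` and `cagr` helpers are hypothetical placeholders chosen only to show the shape, not this report's parameters.

```python
# Hypothetical logistic adoption curve plus CAGR helper.
# Curve parameters are placeholders chosen only to show the shape.
import math


def adoption_share(year: int, midpoint_year: float, steepness: float,
                   saturation: float) -> float:
    """Logistic S-curve: share of the addressable server base using DPUs in `year`."""
    return saturation / (1.0 + math.exp(-steepness * (year - midpoint_year)))


def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


MIDPOINT, STEEPNESS, SATURATION = 2030, 0.6, 0.8  # placeholder assumptions
shares = {y: adoption_share(y, MIDPOINT, STEEPNESS, SATURATION) for y in range(2026, 2036)}
for year, share in shares.items():
    print(year, f"{share:.1%}")
print(f"implied share CAGR 2026-2035: {cagr(shares[2026], shares[2035], 9):.1%}")
```

Shifting the assumed midpoint or steepness changes every absolute value, but the S-shape and its direction persist, which is the sense in which such outputs are best read as directional trends.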

Outlook and Implications

The trajectory of the DPU market from 2026 to 2035 points toward its evolution from an advantageous accelerator to an indispensable component of mainstream data center infrastructure. The fundamental drivers of data growth, architectural disaggregation, and the need for hardware-enforced security are long-term secular trends, not transient fads. This ensures a sustained and expanding addressable market. Technological advancement will focus on greater integration, with future DPUs likely to absorb more functions from adjacent chips (like storage controllers and SmartNICs) and incorporate dedicated accelerators for emerging workloads such as confidential computing and in-network computing.

For technology vendors, the implications are strategic and far-reaching. Semiconductor companies must decide whether to compete in the DPU space directly, partner deeply with DPU providers, or focus on enabling technologies. Success will depend not just on silicon performance but on cultivating a vibrant software ecosystem. Server OEMs face the challenge of integrating DPUs into their product lines in a way that delivers clear, measurable value to customers, moving beyond checkbox features to genuinely re-architected system designs. Their role as system integrators and validators will become even more critical.

For end-user organizations, the rise of the DPU necessitates a shift in both procurement strategy and IT skill sets. Infrastructure teams will need to develop competencies in managing a heterogeneous compute environment where workloads are dynamically placed on CPUs, GPUs, and DPUs based on their specific requirements. The economic justification will increasingly be a total lifecycle calculation, factoring in savings from software licensing, energy, and data center real estate. Furthermore, the security paradigm will shift, with a greater emphasis on hardware-rooted trust and zero-trust architectures embedded within the infrastructure itself, enabled by the programmable, isolated nature of the DPU.
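
A total lifecycle calculation of the kind described above can be sketched as up-front capital cost plus the discounted sum of recurring licensing, energy, and space costs. The `ServerOption` type, the `lifecycle_cost` helper, and all inputs are hypothetical; in particular, the assumptions that infrastructure software is licensed per host core and that the DPU configuration needs fewer host cores and slightly less power are illustrative, not findings of this report.

```python
# Hypothetical per-server lifecycle cost comparison.
# All inputs are placeholders; the licensing model (per host core) is assumed.
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class ServerOption:
    capex: float             # purchase price per server, including any DPU card
    licensed_cores: int      # host cores that must carry per-core software licenses
    license_per_core: float  # annual license fee per core
    power_kw: float          # average power draw in kilowatts
    rack_units: int          # rack space occupied


def lifecycle_cost(opt: ServerOption, years: int, energy_price_kwh: float,
                   cost_per_ru_year: float, discount_rate: float = 0.0) -> float:
    """Up-front capex plus discounted annual licensing, energy, and space costs."""
    annual = (opt.licensed_cores * opt.license_per_core
              + opt.power_kw * HOURS_PER_YEAR * energy_price_kwh
              + opt.rack_units * cost_per_ru_year)
    total = opt.capex
    for year in range(1, years + 1):
        total += annual / (1.0 + discount_rate) ** year  # discount each year's spend
    return total


# Placeholder inputs: the DPU option costs more up front but licenses fewer host cores.
baseline = ServerOption(capex=20_000.0, licensed_cores=64, license_per_core=100.0,
                        power_kw=0.60, rack_units=2)
with_dpu = ServerOption(capex=22_500.0, licensed_cores=48, license_per_core=100.0,
                        power_kw=0.55, rack_units=2)
for name, opt in [("baseline", baseline), ("with DPU", with_dpu)]:
    cost = lifecycle_cost(opt, years=5, energy_price_kwh=0.12,
                          cost_per_ru_year=300.0, discount_rate=0.08)
    print(name, round(cost))
```

Both options occupy the same rack space here; data center real estate savings would appear only if the DPU configuration allowed workloads to be consolidated onto fewer servers.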

In conclusion, the DPU market is at an inflection point. The analysis to 2035 suggests a future where the DPU ceases to be a discrete product category and instead becomes a fundamental architectural element, as standard as a network interface card is today. Its integration will be key to realizing the next leaps in computational efficiency, agility, and security, making it a critical area of focus for any organization whose competitiveness depends on the power and resilience of its digital infrastructure.